Responsible Intelligence: Engineering Ethical EdTech Infrastructures

The Shift Towards Responsible Digital Learning Ecosystems and AI Ethics in Education

The rise of ethical AI in education marks a watershed in how digital learning systems are designed, deployed, and trusted. EdTech infrastructure is no longer only about isolated digital tools; it is about building responsible digital learning ecosystems in which innovation is balanced with accountability. As AI becomes essential to classrooms, corporate training, and hybrid learning environments, it becomes more important to engineer infrastructures with AI ethics in digital learning, learning data privacy, and human-centred EdTech at their core.

Education is no longer shaped by isolated tools or standalone platforms; it is increasingly defined by interconnected systems that learn, adapt, and evolve. What was once a fragmented digital landscape has transformed into responsible digital learning ecosystems, where technologies interact seamlessly to create continuous, data-driven learning experiences. At the core of this transformation lies AI ethics in digital learning, which is no longer a peripheral concern but a defining feature of modern EdTech infrastructure.

AI as the Backbone of Modern Education Systems

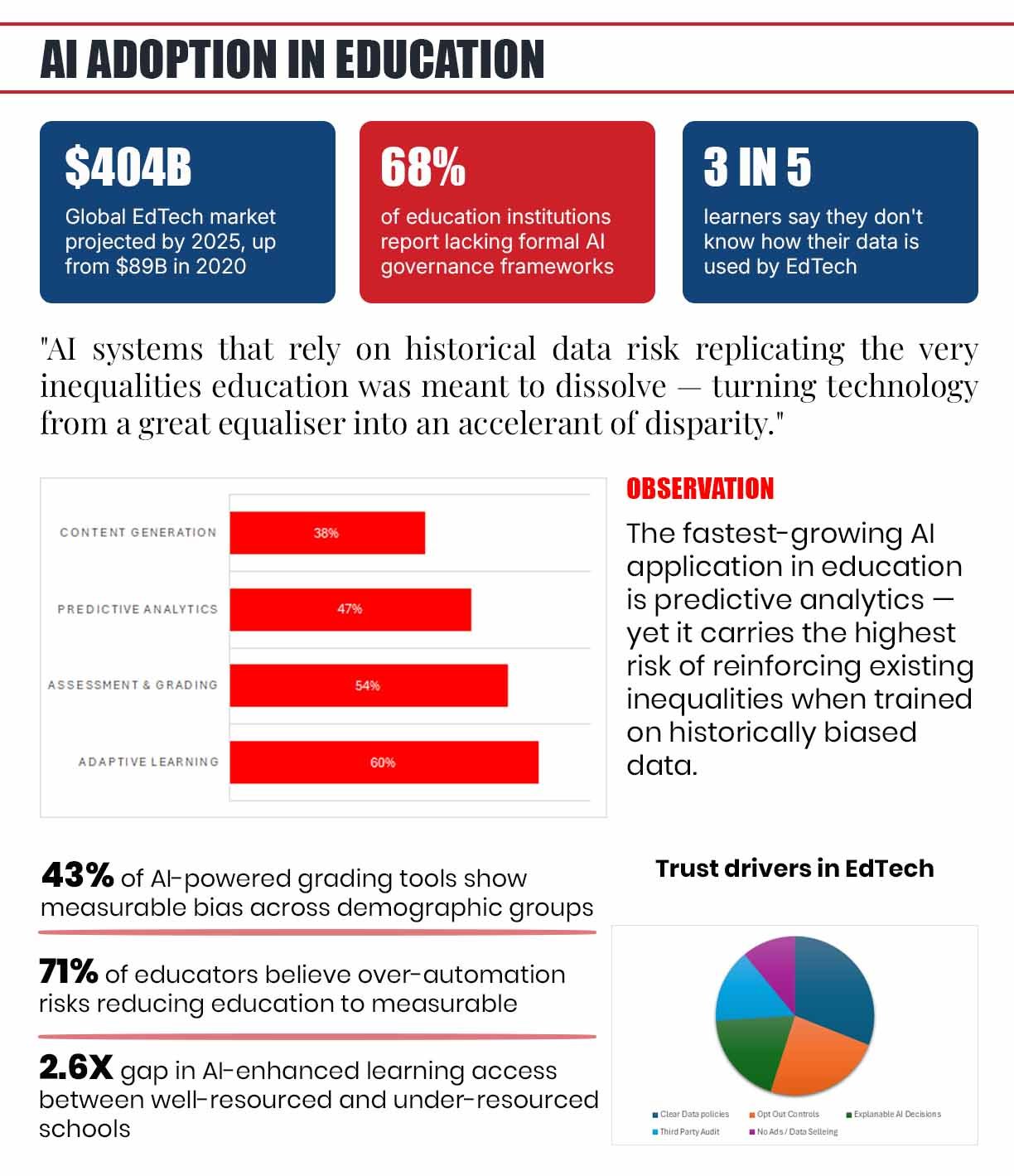

Artificial intelligence has moved from being an experimental addition to becoming the backbone of educational platforms. From adaptive learning paths and intelligent tutoring systems to predictive analytics that identify student performance trends, AI is actively shaping how knowledge is delivered and assessed. This shift marks a transition from passive technology to active participation, where systems not only support learning but influence it in real time.

From Innovation to Responsibility: The Rise of Responsible AI in Education

However, this evolution brings a fundamental shift in responsibility. As institutions increasingly rely on AI to guide educational outcomes, the conversation must extend beyond innovation and efficiency. It must address the deeper question of how these systems are designed and governed. The growing emphasis on how to build ethical AI systems in education reflects this urgency, highlighting the need for infrastructures that align technological advancement with ethical accountability.

The expansion of EdTech in recent years, particularly in response to global disruptions such as the pandemic, has accelerated the integration of AI into mainstream education. Platforms that were once supplementary are now central to institutional frameworks. This rapid scaling has exposed critical vulnerabilities, particularly around learning data privacy and transparency. As a result, responsible intelligence has emerged not as a theoretical ideal but as a practical necessity.

In this new landscape, organizations are beginning to recognize that intelligence without responsibility is unsustainable. The future of education depends not only on how advanced systems become, but on how responsibly they are built and deployed. Innovation has long been the driving force behind EdTech’s growth, enabling rapid experimentation and expansion. Yet, as systems become more complex and influential, innovation alone is no longer sufficient. The focus is shifting toward responsible AI in learning, where accountability becomes as important as capability.

Key Ethical Challenges in AI-Driven Education Technology

AI Bias in Education Technology and Its Impact on Equity

One of the most pressing concerns in this space is AI bias in education technology. AI systems rely heavily on historical data, and when that data reflects existing inequalities, the outcomes produced by these systems can reinforce those same disparities. This is one of the most significant ethical challenges in AI-driven education: technology that is intended to democratize learning may inadvertently deepen existing divides.

The challenges of AI adoption in classrooms are also closely tied to issues of access and equity. While some institutions are able to implement advanced AI-powered systems, others struggle with limited resources and infrastructure. This disparity creates uneven learning environments, where the benefits of technological advancement are not equally distributed. Without deliberate intervention, EdTech risks amplifying inequality rather than addressing it.

Over-Automation vs Human Judgment in Learning Systems

The increasing reliance on automation also raises questions about the role of human judgment in education. AI can efficiently handle tasks such as grading, content recommendation, and performance tracking. However, education is not purely transactional. It involves interpretation, mentorship, and emotional intelligence, dimensions that cannot be fully replicated by algorithms. The risk of over-automation lies in reducing learning to measurable outputs and neglecting the qualitative aspects that define meaningful education.

Transparency and Trust in AI-Powered Learning Platforms

Transparency presents another layer of complexity. Many AI systems function as opaque mechanisms, making decisions without clear explanations. In an educational context, this lack of clarity can undermine confidence among students and educators alike. When users do not understand how outcomes are determined, trust becomes difficult to sustain.
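To make the idea of an explainable outcome concrete, the following is a minimal sketch, assuming a simple weighted scoring model. The feature names, weights, and the `explain_score` helper are hypothetical and chosen only for illustration; real platforms may use very different models. The point is that the system returns the reasons alongside the result.

```python
def explain_score(weights, features):
    """Return the total score and each feature's contribution, largest first."""
    # Each contribution is simply weight * value, so it can be shown to the user.
    contributions = {name: weights[name] * value for name, value in features.items()}
    total = sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    return total, ranked


# Hypothetical weights and learner data (assumptions, not from any real platform).
weights = {"quiz_average": 0.5, "assignments_completed": 0.25, "days_inactive": -0.125}
learner = {"quiz_average": 80, "assignments_completed": 12, "days_inactive": 8}

score, reasons = explain_score(weights, learner)
for name, contribution in reasons:
    print(f"{name}: {contribution:+.1f}")
print(f"total score: {score:.1f}")
```

Showing students and educators which inputs moved a score, and by how much, is one possible way to replace an opaque decision with one they can question.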

Building Ethical AI Systems in Education

For organizations, addressing these challenges is not merely about compliance; it is about credibility. Integrating AI ethics in digital learning into core operations allows institutions to build systems that are not only effective but also trustworthy. Accountability, therefore, becomes a strategic asset, enabling long-term growth and adoption.

Creating ethical systems requires a fundamental shift in how technology is designed. Rather than treating ethics as an afterthought, organizations must embed it into the very architecture of EdTech infrastructure. This approach, often described as ethics-by-design, ensures that ethical considerations are integrated at every stage of development.

Central to this process is AI governance in education, which provides the frameworks necessary to manage risks and ensure accountability. Governance is no longer limited to regulatory compliance; it has become a comprehensive strategy that encompasses data management, algorithmic transparency, and operational oversight. The role of governance in digital learning systems is particularly critical in defining how data is collected, processed, and utilized.

Balancing Flexibility and Compliance in EdTech Systems

Effective governance requires a balance between structure and flexibility. While standards and policies provide essential guidance, they must also adapt to the evolving nature of technology. This dynamic approach enables organizations to respond to emerging challenges while maintaining consistency in ethical practices.

The integration of security, privacy, and transparency is another defining feature of ethical infrastructure. These elements cannot function in isolation; they must be woven together to create systems that are both robust and trustworthy. Secure education technology systems are not simply those that protect data from breaches, but those that ensure data is used responsibly and transparently. Engineering such systems also demands collaboration across disciplines. Technologists, educators, policymakers, and business leaders must work together to align technological capabilities with educational goals. This collaborative approach ensures that systems are not only technically sound but also pedagogically relevant.

As EdTech continues to scale, the importance of ethical infrastructure will only increase. Organizations that prioritize responsible design will be better positioned to navigate regulatory landscapes, build user trust, and sustain long-term growth.

Learning Data Privacy and Trust in EdTech Platforms

Data is the foundation of modern education technology, enabling platforms to personalize learning and optimize outcomes. Yet, this reliance on data also introduces significant challenges, particularly around learning data privacy. As platforms collect vast amounts of information, the question of who owns and controls this data becomes increasingly complex. One of the most critical data privacy issues in EdTech platforms is the lack of transparency in data practices. Users are often unaware of the extent to which their data is collected and analyzed. This lack of clarity creates a disconnect between platform capabilities and user expectations, leading to a potential erosion of trust.

The growing dependence on cloud-based infrastructure further complicates this landscape. While cloud systems provide scalability and accessibility, they also introduce vulnerabilities related to data security and third-party access. Ensuring the integrity of data within these environments requires continuous monitoring and robust safeguards.

Building secure education technology systems involves more than implementing technical solutions. It requires a comprehensive approach that combines technological measures with ethical considerations. Security protocols must be complemented by clear policies that define how data is handled and protected.
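As one illustration of pairing a technical measure with a data-handling policy, the sketch below drops fields that a hypothetical policy does not allow and replaces the student identifier with a keyed hash. The field names, the `ALLOWED_FIELDS` policy, and the demo key are assumptions made for this example; a real deployment would need proper key management and a policy agreed with the institution.

```python
import hashlib
import hmac

# Hypothetical data-minimization policy: only these fields may be stored for analytics.
ALLOWED_FIELDS = {"course_id", "quiz_score"}


def pseudonymize(student_id: str, secret_key: bytes) -> str:
    """Replace a student identifier with a keyed SHA-256 hash (HMAC)."""
    return hmac.new(secret_key, student_id.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize(record: dict, secret_key: bytes) -> dict:
    """Keep only policy-approved fields and add a pseudonymous learner reference."""
    cleaned = {key: value for key, value in record.items() if key in ALLOWED_FIELDS}
    cleaned["learner_ref"] = pseudonymize(record["student_id"], secret_key)
    return cleaned


# Illustrative values only; never hard-code a real key.
key = b"demo-key-for-illustration-only"
raw = {"student_id": "s-1042", "email": "learner@example.com",
       "course_id": "MATH101", "quiz_score": 87}
safe = minimize(raw, key)
print(sorted(safe))
```

The same student always maps to the same `learner_ref` under a given key, so analytics can still follow progress over time without storing the direct identifier or the email address.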

Trust as a Driver of Adoption in EdTech

Trust plays a central role in this ecosystem. Trust in EdTech platforms is not established through technology alone; it is built through consistent and transparent practices. When users feel confident that their data is handled responsibly, they are more likely to engage with the platform and benefit from its capabilities.

Organizations must therefore adopt a proactive approach to trust-building. This involves communicating openly with users, providing clear insights into system operations, and establishing mechanisms for accountability. Trust, once established, becomes a powerful driver of adoption and engagement.

In a landscape where data is both an asset and a responsibility, the ability to manage it ethically will define the success of EdTech platforms.

As technology becomes increasingly sophisticated, the importance of human-centred EdTech becomes more pronounced. At its essence, education is a deeply human process, shaped by interaction, interpretation, and experience. Technology should enhance these elements, not replace them.

In AI-enabled environments, the role of educators is evolving rather than diminishing. Teachers are no longer just content providers; they are facilitators who guide how technology is integrated into the learning process. Their ability to contextualize information, provide feedback, and foster engagement remains irreplaceable. Balancing technological capabilities with pedagogical principles is essential. While AI can deliver personalized content, it cannot replicate the nuanced understanding that educators bring to the classroom. This balance ensures that learning remains holistic, addressing both cognitive and emotional dimensions.

Inclusion is another critical aspect of designing EdTech ecosystems. Ensuring that platforms are accessible to diverse learners requires thoughtful design and continuous evaluation. This includes addressing linguistic diversity, accommodating different learning styles, and ensuring accessibility for individuals with varying abilities.

The issue of AI bias in education technology also intersects with inclusion. Systems must be designed to reflect diverse perspectives and avoid reinforcing existing inequalities. This requires a commitment to diversity not only in data but also in design processes.
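One way to check whether a system is reinforcing existing inequalities is to compare how often it produces a favourable outcome for different learner groups. The following is a minimal sketch under stated assumptions: the group labels, the sample records, and the helper names are hypothetical, and a single ratio is only a starting point for review, not a complete fairness audit.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of positive recommendations for each learner group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, recommended in records:
        totals[group] += 1
        positives[group] += int(recommended)
    return {group: positives[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Lowest group rate divided by the highest; 1.0 means equal rates."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0


# Hypothetical records: (group label, recommended for an advanced track?)
records = [("A", True), ("A", True), ("A", False), ("A", True),
           ("B", True), ("B", False), ("B", False), ("B", False)]

rates = selection_rates(records)
ratio = disparity_ratio(rates)
print(rates, round(ratio, 2))
```

A ratio well below 1.0, as in this made-up data, would be a prompt for people to examine the training data and the design choices behind the recommendation, rather than a verdict in itself.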

Human-Centred EdTech: Designing Learning Around People

Human-centred design emphasizes agency, allowing learners to take control of their educational journeys. By providing flexibility and choice, platforms can empower users to engage more deeply with content and develop critical thinking skills.

Ultimately, human-centred EdTech ensures that technology serves as a tool for empowerment rather than control. It reinforces the idea that education is not just about efficiency, but about meaningful engagement and growth.

The future of education will be defined by the convergence of advanced technologies, including AI, data analytics, and immersive experiences. These innovations have the potential to transform learning into a more interactive and personalized experience. However, they also introduce new complexities that must be addressed through responsible design.

The future of responsible digital learning will be shaped by evolving regulatory frameworks and increasing awareness of ethical issues. Governments and institutions are beginning to establish guidelines that govern data usage, AI transparency, and digital rights. These regulations will play a crucial role in shaping how responsible digital learning ecosystems are developed and implemented. At the same time, the pace of technological innovation continues to accelerate. This creates a gap between what technology can achieve and what regulatory frameworks can manage. Bridging this gap requires proactive strategies that anticipate challenges and address them before they become systemic issues.

Scalability is another key consideration. As EdTech platforms expand, maintaining ethical standards becomes more complex. Systems must be designed to operate responsibly at scale, ensuring consistency in data practices, security measures, and user experience. The expectations of users are also evolving. Students, educators, and institutions are increasingly demanding platforms that are transparent, secure, and inclusive. This shift is redefining success in the EdTech industry, placing greater emphasis on trust and accountability.

The convergence of technologies also invites a rethinking of educational goals. As AI takes on more functional roles, the focus of education may shift toward skills that machines cannot easily replicate, such as critical thinking, creativity, and ethical reasoning.

The Future of Responsible Digital Learning Ecosystems

In this context, responsible intelligence becomes a guiding principle. It aligns technological innovation with human values, ensuring that progress is both meaningful and sustainable. The evolution of EdTech into intelligent, data-driven ecosystems represents a defining moment for education. As AI becomes integral to learning systems, the importance of AI ethics in digital learning, human-centred EdTech, and secure education technology systems cannot be overstated.

Responsible intelligence is not simply about mitigating risks; it is about creating systems that are designed with purpose and integrity. It reflects a commitment to building educational environments that are fair, transparent, and inclusive.

For organizations, this represents both a challenge and an opportunity. Those that invest in ethical infrastructure will not only navigate the complexities of modern education but also set new standards for the industry.

In the end, the goal is clear: to engineer learning ecosystems that are not only intelligent, but responsible by design.