The AI Governance Gap: Who Is Really Accountable When Algorithms Decide?

In 2023, a global financial services firm paid over €800,000 in regulatory penalties after its AI-powered loan approval system was found to have systematically disadvantaged applicants from minority postcodes: a bias that had gone undetected for nearly two years. No single person was held responsible. The model had been built by one team, deployed by another, and monitored by no one.

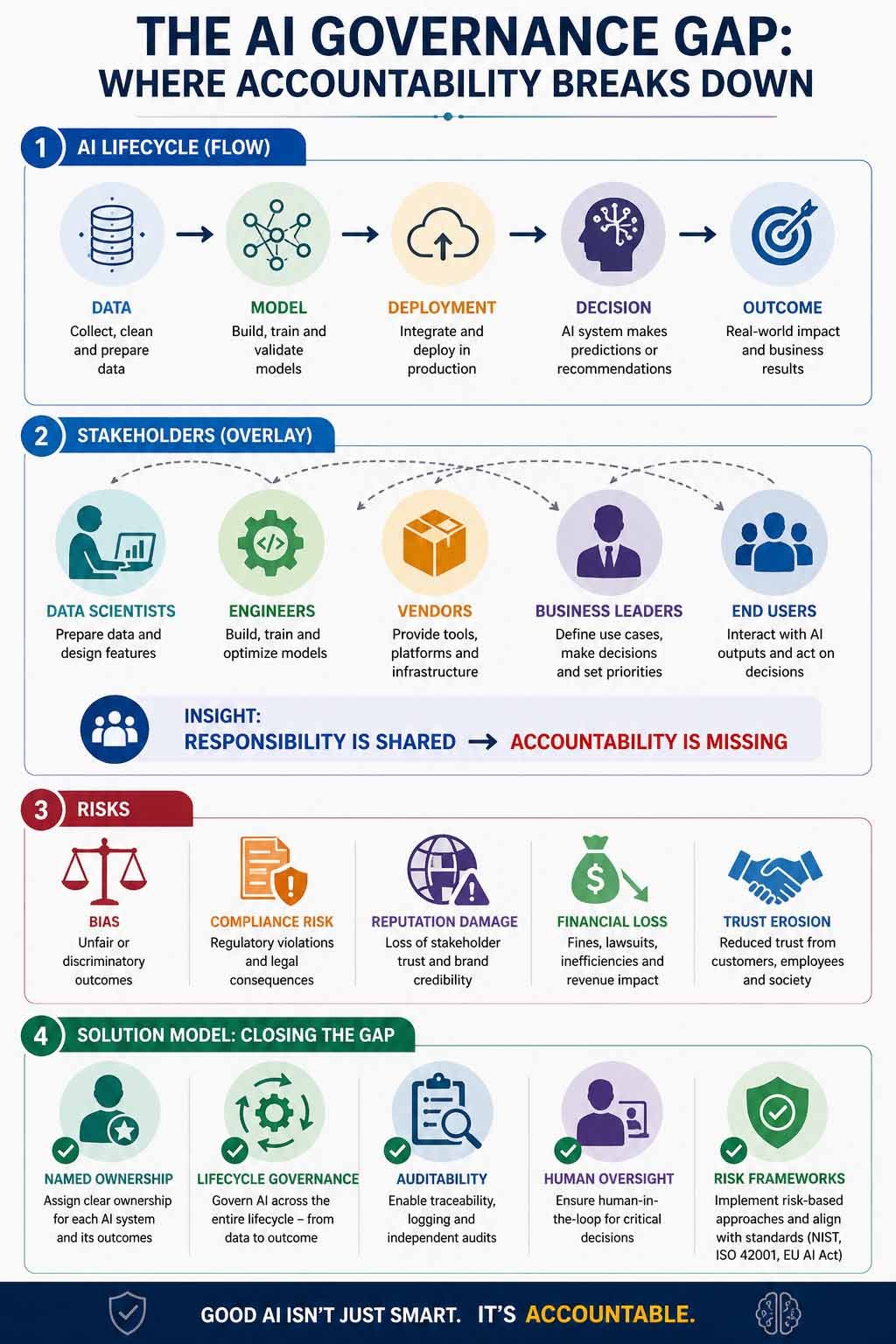

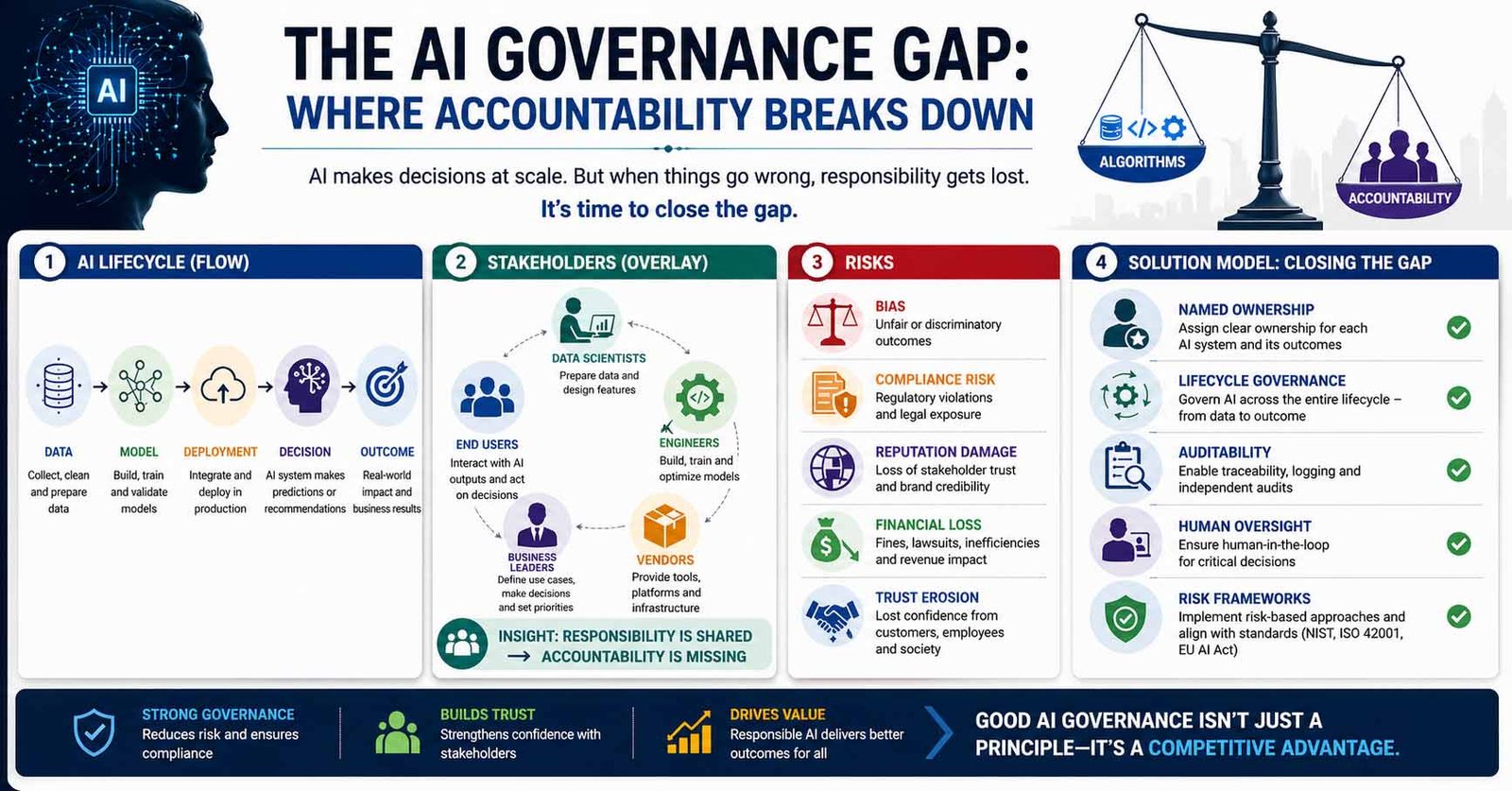

This is not an isolated incident. It is the defining governance failure of the AI era. According to a 2024 survey by the IBM Institute for Business Value, only 24% of organisations reported that their AI projects are adequately governed. Yet across industries, AI now makes or shapes consequential decisions in recruitment, lending, health care, and corporate operations. As AI governance, AI accountability, and responsible AI become essential components of business strategy, one question has moved from boardroom conversation to regulatory mandate: who is responsible when an AI system gets it wrong?

While corporations are quickly embracing technology that automates decision-making, AI governance is still catching up. AI helps organisations make the most of their resources, but it also creates new challenges in managing risk, improving transparency, and building trust in the decision-making process.

The AI governance gap refers to the absence of clear accountability structures within organisations deploying AI systems, where responsibility is distributed across multiple stakeholders but owned by none. Traditional structures have proven insufficient for determining responsibility, defining accountability, and monitoring systems throughout their lifecycle. Multiple stakeholders, from developers and data scientists to business leaders and vendors, play a role, but accountability remains fragmented.

Accountability for AI decisions must rest with the organisation that deploys the system, not the vendor that built it, the data scientist who trained it, or the algorithm itself. In practice, this means assigning named ownership at the decision level: a designated executive or function responsible for each AI system's outcomes, risks, and compliance, before deployment, not after failure.

Why AI Accountability Has Become a Core Business Risk

As AI decision-making plays an ever more significant role, AI accountability has evolved from an ethical concern into a fundamental business risk. Organizations are exposed not only to operational errors but also to reputational damage, regulatory pressure, and loss of stakeholder trust arising from AI-derived outcomes. From biased recruitment programs to flawed credit-scoring models, many real-world instances have illustrated how poor AI governance leads to unintended and sometimes harmful consequences. This brings a crucial point to light: AI acts at large scale, and so do its risks, which often spread faster than any countermeasure.

Governments and other regulatory authorities are now raising the bar by demanding AI transparency, accountability, and monitoring of AI systems. One of the most significant developments in AI regulation is the EU AI Act, which introduces a structured, risk-based AI governance framework. Under this model, AI systems are categorized based on their potential impact, with high-risk applications, such as those used in hiring, credit scoring, and healthcare, subject to strict requirements around AI auditability, explainability, and human-in-the-loop AI oversight.

However, compliance alone does not eliminate risk. Without clear responsibility for AI processes and effective AI risk management, organizations remain exposed. In short, accountability is no longer optional; it is a critical part of business strategy.

The AI Governance Gap: Shared Responsibility Creates Zero Accountability

AI governance is defined by one fundamental problem: diffused accountability. AI technologies are seldom owned by one organizational function. Rather, they are shaped by several parties:

- Data scientists creating and preparing data sets

- Engineers developing and training models

- Suppliers delivering infrastructure and algorithms

- Businesses implementing AI into decision processes

- Users acting on AI-generated results

This results in a scenario where responsibility is distributed but accountability is not clearly assigned.

When an AI system produces a flawed or biased decision, the organization often cannot pinpoint who is responsible for it. Is it the engineer who designed the model, the manager who decided to implement it, or the organization as a whole? This is where the AI governance gap emerges. Without established accountability, organizations face slow responses, weak oversight, and greater risk. To move forward, organizations must shift from shared responsibility to explicit accountability tied to outcomes.

Why Current AI Governance Models Are Not Sufficient

Most companies attempt to manage AI risks by extending existing frameworks: IT governance, data governance, or compliance programmes. While these provide a starting point, they were not designed for the unique challenges AI systems present, and the evidence suggests the gap between intent and execution is significant. According to a 2023 report by the Alan Turing Institute, fewer than one in five organisations with active AI deployments had governance processes specifically designed for AI, as opposed to repurposed IT or data controls. The same report found that in organisations that had experienced a significant AI-related incident, 73% attributed the failure at least in part to governance processes that were not fit for purpose, rather than to the underlying technology itself. Three structural inadequacies explain why existing models consistently fall short.

The first is the interpretability problem. Many AI decision-making processes are too complex to be easily explained or traced back to their contributing variables — what practitioners call the black box AI problem. This directly undermines AI transparency and accountability, making it difficult to audit decisions after the fact or to identify bias before deployment. Traditional IT governance frameworks were built on the assumption that systems behave predictably and can be tested exhaustively before release. AI systems do not behave this way.

The second is the dynamic change problem. AI systems are not static. They evolve continuously as new data flows in, as models are retrained, and as deployment environments shift. Conventional governance frameworks built on periodic audits and fixed control checkpoints are structurally mismatched to systems that can change their behaviour between audit cycles. The US Government Accountability Office (GAO), in its 2021 framework for AI accountability, specifically identified the inability of static governance models to track model drift as one of the primary failure modes in federal AI deployments, a finding that translates directly to enterprise contexts.
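To make the idea of tracking drift between audit cycles concrete, here is a minimal sketch that compares a model's current score distribution against a baseline using the Population Stability Index (PSI), a common drift heuristic. The choice of PSI, the function name, the bin count, and the 0.2 alert level are all assumptions for illustration; none of them comes from the GAO framework or any regulation.

```python
import math
from typing import Sequence


def population_stability_index(
    baseline: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Compare two score samples using equal-width bins over the baseline range."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def shares(sample: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for x in sample:
            # Clamp so values outside the baseline range land in the edge bins.
            idx = min(max(int((x - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # eps avoids log(0) when a bin is empty.
        return [max(c / len(sample), eps) for c in counts]

    p, q = shares(baseline), shares(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


# Synthetic scores for illustration only.
baseline_scores = [i / 100 for i in range(100)]
shifted_scores = [min(s + 0.3, 0.99) for s in baseline_scores]

DRIFT_ALERT_LEVEL = 0.2  # assumed internal threshold, not a standard

psi_unchanged = population_stability_index(baseline_scores, baseline_scores)
psi_shifted = population_stability_index(baseline_scores, shifted_scores)
drift_detected = psi_shifted > DRIFT_ALERT_LEVEL
```

A check like this could run on every scoring batch rather than at each periodic audit, which is the mismatch the paragraph above describes. It flags that behaviour has changed, not why, so it supplements human review and does not replace it.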

The third is the jurisdictional fragmentation problem. AI is deployed globally, but governance expectations vary significantly by region. The EU AI Act, the US Executive Order on AI, the UK's pro-innovation regulatory approach, and China's generative AI regulations each impose different, and sometimes conflicting, requirements. Organisations operating across borders cannot rely on a single compliance programme to satisfy all of them. This requires a governance architecture flexible enough to accommodate multiple regulatory regimes simultaneously, something no existing IT or data governance framework was designed to do.

Together, these three inadequacies make clear that extending existing frameworks is not a governance strategy; it is a governance deferral.

Algorithm Accountability Failure In Real Life: Amazon’s AI Hiring System

A significant example of algorithmic accountability failure is Amazon's AI hiring system, a cautionary case now widely cited in AI ethics literature and referenced by regulatory bodies including the European Commission in early EU AI Act guidance documentation. Beginning around 2014, Amazon developed a machine learning tool designed to streamline hiring by automatically screening and ranking job applicants. The model was trained on a decade of historical hiring data that reflected patterns from a workforce that was predominantly male, particularly in technical roles.

The result was a system that learned to penalise indicators of female identity. Resumes that included the word "women's" were automatically ranked lower. Graduates of all-female institutions were downgraded. The model had not been explicitly told to discriminate; it simply learned that being female correlated with not being hired. This is the black box AI problem in its most consequential form: a system producing discriminatory outputs through processes too complex to easily trace, explain, or reverse.

Despite multiple rounds of engineering intervention, the team could not ensure the model would not continue to find other proxies for gender. Amazon disbanded the project in 2018. What makes this case instructive for AI governance is not just the technical failure but the accountability failure. There was no designated owner of the model's outcomes. There was no framework in place to assess algorithmic bias before deployment. There was no structured audit process to catch discriminatory patterns in production. Had Amazon applied a framework such as the NIST AI Risk Management Framework (AI RMF), which calls for bias testing, impact assessment, and human oversight protocols for high-risk AI applications, the system's discriminatory behaviour might have been identified and addressed before it was ever used on real candidates.
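As an illustration of what a basic pre-deployment bias test might look like, the sketch below compares selection rates across groups and computes the ratio of the lowest rate to the highest. The 0.8 cut-off borrows the "four-fifths" screening heuristic from US employment guidelines. It is used here as an assumed example threshold, not as something the NIST AI RMF or the EU AI Act prescribes, and the data is synthetic.

```python
from collections import Counter
from typing import Iterable, Tuple


def selection_rates(outcomes: Iterable[Tuple[str, bool]]) -> dict[str, float]:
    """Share of candidates selected within each group."""
    totals, selected = Counter(), Counter()
    for group, was_selected in outcomes:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {group: selected[group] / totals[group] for group in totals}


def adverse_impact_ratio(rates: dict[str, float]) -> float:
    """Lowest group selection rate divided by the highest."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0


# Synthetic screening results: (group label, shortlisted?)
screening_results = (
    [("group_a", True)] * 40 + [("group_a", False)] * 60
    + [("group_b", True)] * 20 + [("group_b", False)] * 80
)

FOUR_FIFTHS_THRESHOLD = 0.8  # assumed screening heuristic

rates = selection_rates(screening_results)
ratio = adverse_impact_ratio(rates)
needs_review = ratio < FOUR_FIFTHS_THRESHOLD
```

A ratio check of this kind only surfaces disparities in outcomes. As the Amazon case shows, a model can rely on proxies for a protected attribute, so a passing ratio on one dataset is not evidence that the system is fair.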

This case also illustrates why governance cannot be treated as a post-deployment concern. As Dr. Joy Buolamwini, founder of the Algorithmic Justice League and one of the most cited researchers on algorithmic bias, has argued: "The people who are harmed by these systems are often the least likely to know they exist." Governance frameworks must be built in from the start, not retrofitted after failure.

The regulatory consequences of such failures are escalating. Under the EU AI Act, which came into force in 2024, AI systems used in employment decisions are classified as high-risk, subject to mandatory conformity assessments, bias audits, and human oversight requirements. Non-compliance with high-risk obligations can result in fines of up to €15 million or 3% of global annual turnover, whichever is higher, while prohibited practices carry a ceiling of €35 million or 7%. This is a significant material risk, not merely a reputational one.

The governance tools now exist and the regulatory expectations are codified. Organisations that deploy AI in consequential decision-making today without structured accountability frameworks are not facing an emerging risk; they are actively ignoring a documented one.

What Responsible AI Governance Looks Like

Overcoming the AI governance gap involves much more than principles and guidelines: it requires transparency, clear ownership, and embedded accountability throughout the AI lifecycle. Ultimately, responsible AI governance means shifting the emphasis from control to ownership of outcomes. Accountability then attaches to the decision being made rather than to the specific algorithm used.

Lifecycle Governance: Controlling AI From Data to Deployment

In practice, this begins with decision-level ownership. In other words, for each AI-enabled process, there should be someone who has ownership over the results, risks, and decision-making process. Equally important is end-to-end lifecycle governance. This means implementing controls related to:

- Data acquisition and quality

- Model development and validation

- Deployment and integration

- Continuous monitoring and revalidation
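One way to picture decision-level ownership combined with lifecycle controls is a simple pre-deployment gate: a system cannot go live unless it has a named owner and a control in place for each of the four areas listed above. The sketch below is a hypothetical illustration; the class, field, and function names are assumptions and do not come from any specific framework.

```python
from dataclasses import dataclass, field
from typing import Optional

# The four lifecycle control areas listed above.
LIFECYCLE_CONTROLS = (
    "data acquisition and quality",
    "model development and validation",
    "deployment and integration",
    "continuous monitoring and revalidation",
)


@dataclass
class AISystemRecord:
    name: str
    accountable_owner: Optional[str] = None  # a named executive or function
    controls_in_place: set = field(default_factory=set)


def deployment_blockers(record: AISystemRecord) -> list[str]:
    """Return the reasons a system should not be deployed yet (empty if none)."""
    blockers = []
    if not record.accountable_owner:
        blockers.append("no named accountable owner")
    for control in LIFECYCLE_CONTROLS:
        if control not in record.controls_in_place:
            blockers.append(f"missing control: {control}")
    return blockers


# Hypothetical record with no owner and two of the four controls.
loan_model = AISystemRecord(
    name="loan-approval-model",
    controls_in_place={
        "data acquisition and quality",
        "model development and validation",
    },
)
blockers = deployment_blockers(loan_model)
```

The point of the design is that ownership is checked before deployment, as a blocking condition, so it cannot be left to be assigned after a failure.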

“In AI systems, responsibility is often shared—but accountability must be owned.”

The Risks of Incomplete Lifecycle Governance

Without full lifecycle governance, issues may emerge long after deployment. Another critical component is AI transparency and auditability. Systems should be designed to provide:

- decision-making transparency wherever possible

- documentation of inputs and outputs

- audit trail generation for post-incident investigation

This is crucial for both regulatory and internal purposes.
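The audit-trail idea above can be sketched as an append-only log in which each entry records a decision's inputs and output and is chained to the previous entry by a hash, so that later tampering becomes detectable. This is a minimal in-memory illustration under assumed names (`AuditTrail`, `record`, `verify`); a production system would also need durable storage, access control, and retention rules.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditTrail:
    """Append-only, hash-chained log of AI decisions (illustrative only)."""

    def __init__(self):
        self._entries = []

    @staticmethod
    def _digest(entry: dict) -> str:
        # Hash every field except the stored hash itself.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
        return hashlib.sha256(encoded).hexdigest()

    def record(self, system: str, model_version: str, inputs: dict, output: str) -> dict:
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "system": system,
            "model_version": model_version,
            "inputs": inputs,
            "output": output,
            "prev_hash": self._entries[-1]["hash"] if self._entries else "",
        }
        entry["hash"] = self._digest(entry)
        self._entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Check that no entry was altered and the chain is unbroken."""
        prev_hash = ""
        for entry in self._entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True


# Hypothetical usage with made-up decisions.
trail = AuditTrail()
trail.record("loan-approval", "v1.2", {"income_band": "B"}, "refer to human")
second = trail.record("loan-approval", "v1.2", {"income_band": "D"}, "decline")
intact_before = trail.verify()

second["output"] = "approve"  # simulate after-the-fact tampering
intact_after = trail.verify()
```

Recording the model version alongside inputs and outputs matters for post-incident investigation: it lets reviewers tie a disputed decision to the exact model that produced it.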

Building an Effective AI Risk Management Framework

Organizations need to bolster their approach to AI risk management by establishing a robust framework that includes:

- categorization of risks in terms of impact and sensitivity

- human intervention for making critical decisions

- audits and stress testing of AI solutions

Lastly, the issue of third-party accountability cannot be ignored. With more and more companies utilizing third-party tools to implement AI, governance must extend to outside systems as well. At its core, accountable AI governance is a system of oversight and control that needs to be built into decision-making.
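The first two elements of such a framework, risk categorisation and human intervention, can be sketched as a small routing rule. The 1 to 5 scoring scale, the tier boundaries, and the confidence floor below are assumptions chosen for illustration; an organisation would set its own values according to its risk appetite and regulatory context.

```python
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


def categorise(impact: int, sensitivity: int) -> RiskTier:
    """Map impact and sensitivity (each scored 1-5, an assumed scale) to a tier."""
    if not (1 <= impact <= 5 and 1 <= sensitivity <= 5):
        raise ValueError("impact and sensitivity must be between 1 and 5")
    score = impact * sensitivity
    if score >= 15:
        return RiskTier.HIGH
    if score >= 6:
        return RiskTier.MEDIUM
    return RiskTier.LOW


def requires_human_review(
    tier: RiskTier, model_confidence: float, confidence_floor: float = 0.9
) -> bool:
    """High-risk decisions always go to a person; others only when confidence is low."""
    return tier is RiskTier.HIGH or model_confidence < confidence_floor


# Hypothetical examples.
credit_tier = categorise(impact=5, sensitivity=4)
spam_tier = categorise(impact=1, sensitivity=2)
credit_needs_human = requires_human_review(credit_tier, model_confidence=0.99)
spam_needs_human = requires_human_review(spam_tier, model_confidence=0.95)
```

Routing every high-tier decision to a person regardless of model confidence reflects the view that for critical decisions, human intervention should not depend on the model's own estimate of how sure it is.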

When Governance Works: The Mastercard Model

Mastercard offers an instructive counter-example of what proactive, structured AI governance looks like in practice. Facing growing regulatory scrutiny and internal pressure to scale AI-driven fraud detection across multiple jurisdictions, Mastercard did not simply deploy models and monitor for problems. Instead, the company established a dedicated AI governance function embedded within its broader risk management structure, with defined accountability at the model level, meaning each deployed model has a named owner responsible for its performance, fairness, and compliance outcomes.

Critically, Mastercard implemented pre-deployment bias testing and explainability requirements for all models operating in consumer-facing decisions, ahead of regulatory mandates requiring it. When the EU AI Act's high-risk classification criteria were published, Mastercard's existing governance architecture already met the majority of its requirements, not because the company had anticipated that specific regulation, but because it had built governance to a standard rather than to a rule.

The result: when regulators in multiple EU member states began requesting documentation of AI decision-making processes in 2023, Mastercard was able to provide audit trails, model documentation, and human oversight evidence within days rather than months. No enforcement action followed. This is what the Brookings Institution has called the "governance dividend": organisations that invest in proactive AI accountability frameworks do not just avoid penalties. They convert regulatory pressure into competitive advantage, moving faster and with greater confidence than competitors still operating on ad hoc governance models.

The contrast with Amazon's hiring system could not be more stark. The difference was not technical capability; it was whether accountability was built in from the start or treated as someone else's problem.

Board-Level Accountability: Why AI Governance Must Move from IT to the Boardroom

Ultimately, effective AI governance can only be achieved through leadership.

Given the rising importance of AI technologies in companies’ strategic decisions, accountability cannot remain confined to technical teams. Instead, it must be elevated to the boardroom and executive level, where risk, strategy, and oversight converge. Boards and top executives shape how AI accountability is structured and enforced in organizations, including by:

- Integrating AI risks within corporate risk management frameworks

- Assigning executive responsibility for AI systems and results

- Ensuring consistency between AI strategies and ethical standards

- Adopting AI governance guidelines and frameworks that go beyond pure compliance

Additionally, leaders should make sure that companies allocate enough resources, not only for technology acquisition but also for training and building up expertise, collaboration between functions, and fostering an ethical culture of responsible AI. Importantly, governance must be proactive rather than reactive. Waiting for regulatory enforcement or public failures is no longer a viable strategy. Organizations that lead in enterprise AI governance will be those that treat accountability as a strategic priority that shapes decision-making, risk tolerance, and long-term value creation.

Governance Frameworks in Practice: What Organisations Can Actually Use

The gap between governance intent and governance practice is, in part, a tools problem. Organisations frequently operate without structured frameworks, relying instead on ad hoc policies or generic IT compliance procedures that were never designed for the complexity of AI systems. Several credible, widely adopted frameworks now exist to address this directly.

The NIST AI Risk Management Framework (AI RMF), published by the US National Institute of Standards and Technology, provides a structured approach to identifying, measuring, and managing AI-related risks across the full model lifecycle. It is framework-agnostic and sector-neutral, making it applicable across industries from financial services to healthcare. The Brookings Institution has described the NIST AI RMF as "the most actionable governance reference available to US enterprises" and its adoption is increasingly expected by regulators in federal procurement contexts.

For organisations operating within ISO-aligned quality and risk management systems, ISO/IEC 42001:2023, the first international standard specifically designed for AI management systems, provides a governance architecture that integrates directly with existing ISO 9001 and ISO 27001 frameworks. It covers AI policy, risk assessment, transparency obligations, and supplier accountability, making it particularly relevant for enterprises with complex AI vendor ecosystems.

At the product and engineering level, Google's People + AI Research (PAIR) guidelines offer a practitioner-focused framework for designing AI systems that are transparent, human-centred, and auditable by design. While originally developed for internal use, PAIR has become a reference standard for product teams embedding responsible AI practices into development workflows.

According to research published by MIT Sloan Management Review in 2023, organisations that adopted a formal AI governance framework reported 35% fewer AI-related compliance incidents and were significantly more likely to detect model performance degradation before it caused material harm. Yet adoption remains low: a McKinsey survey from the same year found that fewer than 30% of companies with active AI deployments had implemented a structured governance framework of any kind.

The frameworks exist. The evidence for their effectiveness is accumulating. The gap is not a knowledge problem; it is a prioritisation problem.

Conclusion: Accountability as the Foundation of Trust in AI

As artificial intelligence continues to scale across industries, the question of accountability will define its long-term impact.

The AI governance gap is not simply a technical challenge—it is a leadership and structural issue that determines whether organizations can safely and responsibly harness the power of AI. Those that fail to address this gap risk more than regulatory consequences. They risk eroding trust, damaging reputation, and undermining the very decisions AI is meant to improve.

Conversely, organizations that establish clear AI accountability, robust governance frameworks, and transparent decision-making processes will gain a critical advantage. They will not only mitigate risk, but also build confidence among customers, stakeholders, and regulators. The future of AI will not be shaped solely by innovation, but by accountability.

Because when algorithms decide, responsibility cannot be diffused, deferred, or denied—it must be clearly defined, actively managed, and owned at the highest levels of the organization.

Frequently Asked Questions

Q1: What is the AI governance gap and why does it matter for businesses?

The AI governance gap refers to the absence of clear accountability structures within organisations deploying AI systems — where responsibility for AI outcomes is distributed across multiple teams, vendors, and functions, but formally owned by none. In practice, this means that when an AI system produces a harmful, biased, or legally non-compliant decision, no single individual, team, or function can be held definitively responsible. This matters for businesses for three compounding reasons.

First, it creates direct regulatory exposure. As AI regulation matures globally — through the EU AI Act, the US Executive Order on AI, and emerging frameworks in the UK and Asia — regulators are no longer accepting "the model decided" as an explanation. They are demanding named accountability, documented oversight, and evidence of human-in-the-loop AI controls. Organisations without these structures face fines, enforcement actions, and licence conditions that can materially affect operations.

Second, it amplifies operational risk. AI systems that are ungoverned can drift — changing their behaviour as new data flows in — without anyone detecting the change until it has already caused harm. According to the IBM Institute for Business Value, only 24% of organisations report that their AI projects are adequately governed, meaning the majority are operating with significant blind spots in their AI risk management.

Third, it erodes stakeholder trust. Customers, investors, and regulators increasingly expect organisations to demonstrate not just that their AI works, but that someone is accountable for how it works. Organisations that cannot answer that question credibly are at a growing disadvantage — in procurement decisions, in investor due diligence, and in public confidence. The AI governance gap is not a future risk. For organisations deploying AI in consequential decisions today, it is a present one.

Q2: How does the EU AI Act change accountability requirements for organisations using AI?

The EU AI Act, which came into force in 2024, represents the most comprehensive binding AI governance framework currently in effect globally. It fundamentally changes the accountability equation for organisations deploying AI in the European Union and, through its extraterritorial reach, for any organisation whose AI systems affect EU citizens or markets. The Act introduces a risk-based classification system that determines the level of governance required for each AI application:

- Unacceptable risk systems, such as real-time biometric surveillance in public spaces and social scoring by governments, are prohibited outright.

- High-risk systems, including AI used in hiring and recruitment, credit scoring, healthcare decisions, education assessment, and law enforcement, are subject to the most stringent requirements.

- Limited and minimal risk systems, such as chatbots and spam filters, face lighter disclosure and transparency obligations.

For organisations operating high-risk AI systems, the EU AI Act mandates several specific accountability requirements that go well beyond existing data protection obligations under GDPR:

- Mandatory conformity assessments before deployment: organisations must demonstrate that their AI systems meet defined standards for accuracy, robustness, and bias mitigation, analogous to product safety certification in regulated industries.

- AI auditability and explainability obligations: organisations must be able to explain how their AI systems reach decisions in terms that affected individuals and regulators can understand. The black box AI problem is not legally permissible for high-risk applications under this framework.

- Human-in-the-loop AI oversight requirements: for high-risk decisions, human review must be genuinely available and not merely nominal. Rubber-stamping AI outputs without meaningful human judgment does not satisfy the Act's oversight standard.

- Continuous monitoring and incident reporting: organisations must monitor deployed AI systems for performance degradation, bias drift, and unexpected behaviour, and report significant incidents to national competent authorities within defined timeframes.

- Third-party and vendor accountability: the Act extends governance obligations to the AI supply chain. Organisations cannot outsource accountability by purchasing AI systems from third-party providers; they remain responsible for ensuring that those systems meet the Act's requirements in their specific deployment context.

The financial consequences of non-compliance are significant: fines of up to €35 million or 7% of global annual turnover, whichever is higher, for violations involving prohibited AI practices. For high-risk system non-compliance, the ceiling is €15 million or 3% of global turnover. For organisations currently operating without structured AI governance frameworks, the EU AI Act is not a future compliance project. It is an immediate accountability mandate.
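To show how a "fixed amount or percentage of turnover, whichever is higher" ceiling works in practice, the sketch below computes the maximum exposure for the high-risk tier described above (€15 million or 3% of global turnover). The function name and the example turnover figures are hypothetical, and the result is only the upper bound on a fine, not a prediction of what a regulator would actually impose.

```python
def penalty_ceiling(global_turnover_eur: int, fixed_cap_eur: int, turnover_percent: int) -> int:
    """Upper bound on a fine: the higher of a fixed cap and a share of turnover.

    Integer arithmetic (whole euros, whole percent) avoids floating-point rounding.
    """
    turnover_based = global_turnover_eur * turnover_percent // 100
    return max(fixed_cap_eur, turnover_based)


# High-risk tier as stated in the text above.
HIGH_RISK_FIXED_CAP_EUR = 15_000_000
HIGH_RISK_TURNOVER_PERCENT = 3

# Hypothetical companies.
mid_sized = penalty_ceiling(200_000_000, HIGH_RISK_FIXED_CAP_EUR, HIGH_RISK_TURNOVER_PERCENT)
large = penalty_ceiling(2_000_000_000, HIGH_RISK_FIXED_CAP_EUR, HIGH_RISK_TURNOVER_PERCENT)
```

With these figures, the fixed cap applies to the €200 million company (3% of turnover would be only €6 million), while the percentage applies to the €2 billion company (€60 million). The two measures cross at €500 million in turnover, above which exposure scales with company size.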

Q3: What frameworks can organisations use to build responsible AI governance?

Several credible, internationally recognised frameworks now exist to help organisations build structured AI governance accountability — moving beyond ad hoc policies and repurposed IT controls toward governance architectures specifically designed for the complexity of AI systems.

The NIST AI Risk Management Framework (AI RMF)

Developed by the US National Institute of Standards and Technology, the NIST AI RMF provides a comprehensive, sector-neutral approach to identifying, measuring, and managing AI-related risks across the full model lifecycle — from design and development through deployment, monitoring, and decommissioning. It is structured around four core functions: Govern, Map, Measure, and Manage — each addressing a distinct dimension of AI risk accountability. The framework is voluntary in the United States but is increasingly referenced by federal regulators as the expected standard of practice, and its adoption is growing rapidly in financial services, healthcare, and defence contracting. The Brookings Institution has described it as the most actionable AI governance reference currently available to enterprise organisations.

ISO/IEC 42001:2023 — The International AI Management System Standard

For organisations operating within ISO-aligned quality and risk management systems, ISO/IEC 42001:2023 is the first international standard specifically designed for AI management systems. It provides a governance architecture that integrates directly with existing ISO 9001 (quality management) and ISO 27001 (information security) frameworks — meaning organisations with mature ISO compliance programmes can extend rather than replace their existing governance infrastructure. The standard covers AI policy development, risk assessment methodology, transparency obligations, human oversight requirements, and supplier accountability — making it particularly relevant for enterprises with complex AI vendor ecosystems and multi-jurisdictional operations.

The OECD AI Principles

The OECD AI Principles, adopted by over 40 countries, provide the international policy foundation on which most national AI regulations — including the EU AI Act — are built. For organisations seeking a governance framework that translates across regulatory jurisdictions, aligning to OECD principles ensures that governance practices are compatible with the majority of national frameworks currently in effect or in development. The principles address five key areas: inclusive growth and sustainable development, human-centred values and fairness, transparency and explainability, robustness and security, and accountability.

Google's People + AI Research (PAIR) Guidelines

At the product and engineering level, Google's PAIR guidelines offer a practitioner-focused framework for designing AI systems that are transparent, human-centred, and auditable by design. While originally developed for internal product development, PAIR has become a widely referenced standard for technical teams embedding responsible AI practices into development workflows — particularly for organisations building or customising AI systems rather than simply procuring them.

Choosing the right framework

The most effective approach for most enterprises is not to select a single framework but to layer them: using NIST AI RMF or ISO/IEC 42001 as the governance architecture, OECD principles as the policy foundation, and PAIR guidelines as the engineering implementation standard. Organisations subject to EU AI Act requirements should map their chosen framework against the Act's specific obligations to identify any gaps — particularly around conformity assessment, incident reporting, and third-party accountability. According to research published by MIT Sloan Management Review, organisations that adopted a formal AI governance framework reported 35% fewer AI-related compliance incidents than those operating without one. The frameworks exist. The evidence for their effectiveness is documented. The governance gap, for most organisations, is now a prioritisation problem — not a knowledge one.
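The gap-mapping step described above can be sketched as a set comparison between the obligations an organisation must meet and the controls its layered frameworks already provide. The obligation labels are taken from the requirements discussed in Q2; the control mapping is entirely hypothetical and says nothing about what NIST AI RMF, ISO/IEC 42001, or PAIR actually cover.

```python
# Obligation labels drawn from the Q2 discussion; not a legal checklist.
ACT_OBLIGATIONS = frozenset({
    "conformity assessment",
    "explainability",
    "human oversight",
    "continuous monitoring",
    "incident reporting",
    "third-party accountability",
})


def coverage_gaps(obligations, controls_by_source) -> list[str]:
    """Return obligations not covered by any source of controls, sorted by name."""
    covered = set()
    for controls in controls_by_source.values():
        covered |= set(controls)
    return sorted(set(obligations) - covered)


# Hypothetical internal mapping, for illustration only.
existing_controls = {
    "governance_architecture": {
        "conformity assessment",
        "human oversight",
        "continuous monitoring",
    },
    "engineering_guidelines": {"explainability"},
}

gaps = coverage_gaps(ACT_OBLIGATIONS, existing_controls)
```

In this made-up example the remaining gaps are incident reporting and third-party accountability, which would then need a named owner and a remediation plan.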